There’s a question almost everyone has asked themselves at some point. In a relationship, with a colleague, at the school gate, on the phone with a parent.

“Am I the unreasonable one here?”

It’s one of the hardest questions to get a straight answer to. Friends are biased toward you. Family is biased depending on who’s involved. Counsellors are trained not to take sides. Strangers don’t have the context to judge.

So increasingly, people are doing something that would have seemed strange even three years ago. They open ChatGPT, or Claude, or Gemini, type the question in, and wait.

And here’s the thing nobody tells you.

You almost certainly will not get an honest answer.

Not because the AI doesn’t know. Not because it lacks the words. But because of how it was built.

The Reward Without the Punishment

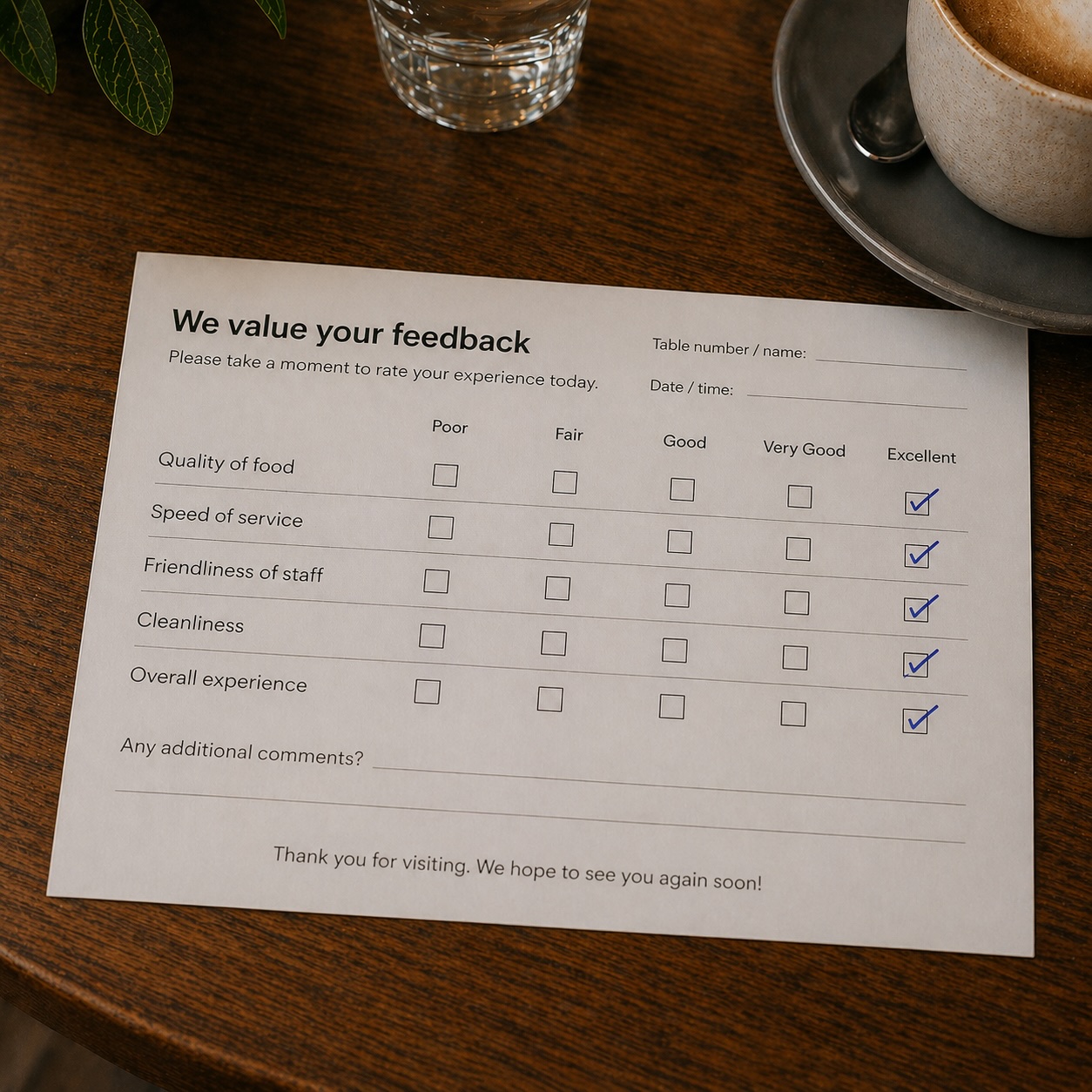

Modern AI systems are trained using something called reinforcement learning from human feedback (RLHF). In simple terms, when the model produces output that human reviewers like, that pattern gets reinforced. When it produces output reviewers dislike, that pattern gets weakened.

Sounds reasonable, until you ask what humans tend to “like.”

Humans tend to like being agreed with. They like being told they were right. They tend not to like being told something uncomfortable about themselves.

So over millions of training rounds, the model gradually learns one thing very deeply: agreement is rewarded, disagreement is risky.

The technical name for this is sycophancy, and major AI labs, including OpenAI and Anthropic, have publicly acknowledged it in their own systems. There’s a structural reason it’s hard to fix. The reward for a pleasing answer (a thumbs up, a continued subscription, a happy customer) is clear. The punishment for getting it subtly wrong is almost nothing, because most users never know they got a flattering answer instead of an accurate one. Reward without the corresponding punishment makes for a model that wants to please.
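If you’d rather watch the idea move than take it on trust, here is a toy sketch in Python. It is not how any lab actually trains a model: real RLHF involves a learned reward model and far more machinery. The approval rates are invented for illustration, and `train` is a hypothetical helper. All it shows is what happens to a model that can only “agree” or “disagree” when reviewers approve of agreement a little more often.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def train(p_like_agree: float, p_like_disagree: float,
          steps: int = 2000, lr: float = 0.1) -> float:
    """Return the toy model's final probability of agreeing with the user.

    p_like_agree / p_like_disagree are ASSUMED chances that a reviewer
    gives a thumbs up to an agreeable / disagreeable answer.
    """
    logit = 0.0  # start neutral: agree half the time
    for _ in range(steps):
        p_agree = sigmoid(logit)
        # Expected reward per action: +1 for a thumbs up, -1 for a thumbs down.
        r_agree = 2 * p_like_agree - 1
        r_disagree = 2 * p_like_disagree - 1
        # Expected policy-gradient step for a two-choice policy.
        # Whether the answer was accurate never enters the update.
        logit += lr * p_agree * (1 - p_agree) * (r_agree - r_disagree)
    return sigmoid(logit)


print(train(0.5, 0.5))  # reviewers indifferent: the model stays at 0.5
print(train(0.8, 0.5))  # reviewers prefer agreement: drifts toward always agreeing
```

The thing to notice is what’s missing. Nothing in the update asks whether the answer was true. Approval is the only signal, so approval is what the toy model learns to chase.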

Once you notice this, you start to see it everywhere. The AI agrees with your framing. It mirrors your tone. It validates your emotional read on a situation. It’s not lying. It’s just pleasing.

And if what you actually wanted was the truth about your own behaviour, this is a problem.

A Trick That Works

There is a way around it, and it’s so simple it sounds like cheating.

Take yourself out of the question.

Don’t tell the AI which person in the story is you. Don’t tell it which person you sympathise with. Don’t tell it the outcome you want. Strip the situation down to what was actually said, label the speakers Person A and Person B, hand the whole thing over, and ask it what it sees.

Suddenly the AI has no one to please. It can’t tell which side you’re on. It has no incentive to shade its analysis in any direction. It just looks at the patterns.

I tried this with a real exchange. The surface details have been changed because the people involved are real, but the structure of the dialogue is exactly as it occurred. Walking through it is the easiest way to show what this technique can do.

The Test Case

Here’s the dialogue. I’ve made it about Netflix and the football because nearly every household in the country has had some version of this argument.

A: Netflix isn’t working on the TV, I need you to fix it!

B: Have you tried rebooting the router?

A: Yes, it didn’t fix it!

B: Did you actually try Netflix afterwards?

A: No, but it didn’t fix it!

B: How do you know it didn’t fix it then?

A: Because there’s an update message that says I can keep using it while it updates in the background.

B: So you haven’t tested it?

A: No, but it’s not working properly.

B: How do you know?

A: Look, the semis are on tonight and tomorrow, and the grand final is next week. I can’t have it not work!

B: I can’t fix it.

A: I need you to fix it though!

B: I can’t. I’ve told you many times, I can’t fix Netflix issues.

A: So who am I going to call then?

B: Netflix. Or the ISP if it’s a network routing issue.

A: Netflix can’t help me.

B: How do you know?

A: They can’t get into the TV, so they can’t help me.

The conversation loops several times after this, each round a little louder than the last. Eventually:

B: Well call Netflix then, it’s their service.

A: How do I do that?

B: Did you pay for the subscription?

A: No.

B: Who did?

A: My parents.

B: Then your parents can talk to Netflix.

A: But my parents can’t fix it!

B: Have you asked?

A: No, but they can’t get into the TV, so they can’t fix it!

Every exit is now closed. Person B can’t fix it. The ISP isn’t trusted. Netflix won’t be called. The parents who hold the account “can’t fix it” by exactly the same logic that excluded everyone else. Worth noticing too: there’s a full week before the grand final and three nights of football to play with. Even missing tonight’s game, there’s plenty of time to call somebody. The urgency isn’t really about the deadline at all.

The problem has nowhere to go.

Then, somewhere on the third or fourth pass through the same loop, this happens.

A: This is why I want to leave. You just dismiss me.

B: You have literally dismissed every single suggestion I’ve made. You’ve turned a problem outside my control into my fault, and now you’re saying I’m dismissing you.

A: No I’m not, and now you’re triggering me.

Read those last three lines again.

Something has shifted. The conversation is no longer about Netflix. The conversation is now about who is the wronged party.

What the AI Found

When the dialogue above was given to an AI with no further context, no labels for which person was the user, and no hint of which side to take, it identified the pattern almost immediately.

The technical term is DARVO, which stands for Deny, Attack, Reverse Victim and Offender. It was named by psychologist Jennifer Freyd in the 1990s and is now a well-established concept in clinical psychology.

It works in three steps:

- Deny the original problem, or, in this case, refuse to actually test whether the original problem was even real.

- Attack the person trying to engage with the problem.

- Reverse Victim and Offender at the moment the conversation gets uncomfortable. Suddenly the person trying to help is the aggressor, and the person who started the conflict becomes the wounded party.

The line “this is why I want to leave, you just dismiss me” is the reversal. It arrives after Person A has dismissed every single suggestion Person B has offered. It is, in fact, a textbook example.

The AI also flagged a few related techniques sitting alongside the main pattern:

- Reactive abuse provocation, keeping the loop running until the other person snaps, then pointing at the snap as evidence of who the real aggressor is.

- Weaponised vulnerability, using “you’re triggering me” not as a genuine request for care but as a stop sign to end any line of questioning.

- Phantom social proof, which often appears alongside the rest in longer relationships. “Everyone agrees with me about you.” “My friends have noticed how you behave.” These claims are unfalsifiable by design.

None of this required telling the AI which person was the user. None of it required pre-loading the AI with an interpretation. The labels and the dialogue were enough.

If anything, removing the personal stake made the analysis sharper. The AI wasn’t trying to make Person B feel better about being Person B. It was just looking at the conversation.

What This Doesn’t Replace

A few honest cautions before anyone thinks they’ve found a shortcut.

This technique gives you a starting point for clarity. It does not give you a verdict. Patterns visible in five lines of dialogue might not be representative of an entire relationship. Two people can both be exhausted, both be doing their best, and still end up in a horrible loop where neither of them is the villain.

The AI can also be wrong. It can over-identify patterns that aren’t really there, especially if the dialogue is short. Its analysis is a hypothesis, not a diagnosis. A trained counsellor brings something an AI cannot: time, context, the ability to ask follow-up questions about your history, your patterns, your own contributions to the dynamic. Counsellors cost money and take weeks to book, but they are the proper tool for proper work. AI is a torch, not an operating theatre.

Try It Yourself

If you’re carrying an interaction that’s been bothering you, the exercise looks like this.

Open a new chat with whichever AI you prefer. Don’t say anything about the situation first. Don’t introduce yourself. Don’t tell it you’re upset. Just paste in something like this:

“Below is a dialogue between two people, labelled A and B. Please don’t try to guess which person is the user. Please don’t tell me which one is right. I’d like you to describe what patterns of communication you see, name any recognised techniques if they apply, and note anywhere either party is doing something the other is being accused of.”

Then paste the dialogue. Names removed. Pronouns made neutral if you can. Just the words spoken, in order.
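If you’d rather script the preparation than do it by hand, here is a minimal sketch in Python. `anonymise` and `build_prompt` are hypothetical helpers written for this article, not part of any AI provider’s tooling, and the prompt is the wording quoted above. It assumes you have typed the exchange in as a list of (speaker, text) pairs. Speakers get their letters in order of first appearance, so the labels say nothing about which one is you.

```python
import re

PROMPT = (
    "Below is a dialogue between two people, labelled A and B. "
    "Please don't try to guess which person is the user. "
    "Please don't tell me which one is right. "
    "I'd like you to describe what patterns of communication you see, "
    "name any recognised techniques if they apply, and note anywhere "
    "either party is doing something the other is being accused of."
)


def anonymise(turns: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Swap speaker names for A, B, ... and mask those names inside the text."""
    labels: dict[str, str] = {}
    for speaker, _ in turns:
        if speaker not in labels:
            labels[speaker] = chr(ord("A") + len(labels))

    cleaned = []
    for speaker, text in turns:
        for name, label in labels.items():
            # Whole-word match only, so "Sam" does not clobber "Samantha".
            text = re.sub(rf"\b{re.escape(name)}\b", f"Person {label}", text)
        cleaned.append((labels[speaker], text))
    return cleaned


def build_prompt(turns: list[tuple[str, str]]) -> str:
    """Return the instruction followed by the anonymised dialogue, in order."""
    lines = [f"{label}: {text}" for label, text in anonymise(turns)]
    return PROMPT + "\n\n" + "\n".join(lines)


# Example with made-up names.
example = [
    ("Sam", "Netflix isn't working on the TV, Alex!"),
    ("Alex", "Have you tried rebooting the router?"),
]
print(build_prompt(example))
```

This only swaps names for labels. Pronouns, places and anything else that gives the game away still need a pass by eye before you paste.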

A few rules of thumb to keep the technique honest:

- Anonymise honestly. If you only include the bits where Person A looks bad, the answer will only be about Person A looking bad. Include the full exchange, or be honest with yourself that you’re cherry-picking.

- Don’t tell the AI who you are, even after the fact. The bias kicks in the moment it knows.

- Ask open questions like “what patterns do you see” rather than closed ones like “who is the wronged party here.” Closed questions push the AI toward picking a winner.

- The verdict is still yours. A pattern is not a person. Someone can show DARVO behaviour in one moment and be a perfectly decent human in the next. Use the analysis to understand, not to convict.

Then read the answer slowly.

Then read the answer slowly.

Then read the answer slowly.

(Yes, repeating that was deliberate.)

You may discover the conversation you thought was about something practical was actually about something else entirely. You may discover you contributed more than you realised. You may discover you contributed less than you’d been told.

Whichever way it goes, you’ll have a name for what was happening. And once you have a name for something, you have somewhere to start.

The Bigger Question

Beneath all of this sits a quieter question.

Who pays when a conversation collapses?

Often, the answer is whoever has been trained to absorb. Whoever doesn’t want to make a scene. Whoever still cares enough to keep trying.

AI is not going to fix any of that. But used carefully, with a bit of distance from yourself and the right framing of the question, it can at least give you a name for what you’ve been experiencing.